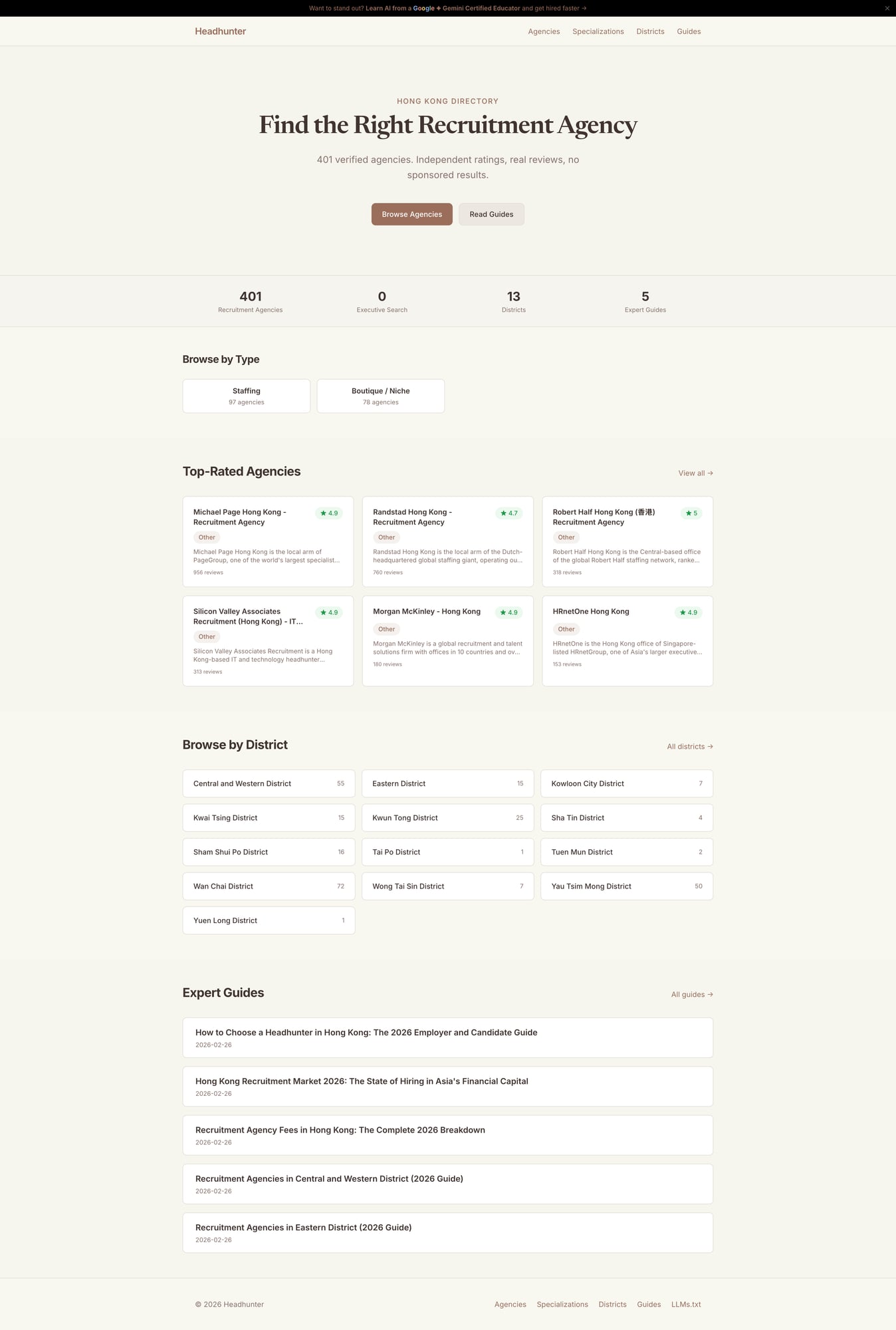

Headhunter.com.hk — AI Search Visibility Experiment

The problem

AI search engines are replacing traditional Google results. Nobody knows how to optimize for them. Meanwhile, premium .hk domains were expiring and available for registration.

What we did

Built a full-stack directory site as an SEO laboratory. 1,076 companies enriched from public data with AI-generated descriptions, service classifications, and review summaries. Knowledge vault architecture with atomic notes and wikilinks. DataForSEO keyword research. Eight service-based specialization pages. District-level content. An llms.txt endpoint for AI crawlers.

Scope

Data pipeline, AI enrichment, knowledge architecture, SEO research, static site generation

Result

Live directory ranking for competitive keywords. 461 pages generated from structured data. Framework proven: one codebase serves six verticals across different .hk domains. Research continues.

The Opportunity

September 2025. Two things happened at the same time.

First, I noticed that dozens of premium Hong Kong domains were expiring. Headhunter.com.hk, auditor.hk, renovation.hk, warehouse.hk. These are exact-match domains for real industries. The kind that cost five figures in .com but were available for HKD 250 each.

Second, I was watching AI search engines eat traditional Google results. ChatGPT, Perplexity, Claude — they don’t just link to pages. They read them, understand them, and cite them. The rules of SEO were changing, and nobody had figured out the new ones yet.

So I registered six domains and started building.

The Hypothesis

Traditional SEO optimizes for Google’s ranking algorithm: backlinks, keyword density, page speed, schema markup. AI search engines care about something different: depth of knowledge, factual density, and structured relationships between entities.

A page that says “here are 10 recruitment agencies” is useless to an AI. A page that says “Michael Page Hong Kong is a Central-based recruitment agency specializing in executive search for financial services, with 4.9 stars across 350 reviews, operating from their Des Voeux Road office” — that’s citable.

The hypothesis: if you build a site that treats every company, every district, every specialization as a richly described entity with explicit relationships, AI search engines will prefer it over thin directory listings.

Data Enrichment

Building the Company Database

Starting point: 1,076 companies across Hong Kong’s recruitment industry, compiled from public business directories and registries.

Raw directory listings are worthless. “ABC Employment Agency, Mong Kok, 4.2 stars” tells you nothing. The enrichment pipeline transforms each listing into a rich, structured entity:

AI-powered classification. Each company’s publicly available information — website content, business descriptions, customer reviews — is processed through Claude to generate:

- A factual 2-3 sentence description (not marketing copy — what the company actually does)

- Service categories (Executive Search, Permanent Placement, Contract Staffing, HR Consulting, etc.)

- Industry specializations

- A review summary synthesizing customer feedback patterns
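As a rough illustration of what one enriched record might look like, here is a minimal sketch that validates a model response before it is stored. The field names, the JSON shape, and the `parse_enrichment` helper are assumptions made for this example, not the pipeline's actual schema, and the allowed-services set only contains the four categories named above (the real list is longer).

```python
import json
from dataclasses import dataclass

# Only the categories named in the text; the real taxonomy has more entries.
ALLOWED_SERVICES = {
    "Executive Search",
    "Permanent Placement",
    "Contract Staffing",
    "HR Consulting",
}


@dataclass(frozen=True)
class Enrichment:
    """Hypothetical shape of one enriched company record."""
    description: str
    service_categories: tuple[str, ...]
    industry_specializations: tuple[str, ...]
    review_summary: str


def parse_enrichment(raw_json: str) -> Enrichment:
    """Parse a JSON response and reject categories outside the taxonomy."""
    data = json.loads(raw_json)
    unknown = set(data["service_categories"]) - ALLOWED_SERVICES
    if unknown:
        raise ValueError(f"unknown service categories: {sorted(unknown)}")
    return Enrichment(
        description=data["description"].strip(),
        service_categories=tuple(data["service_categories"]),
        industry_specializations=tuple(data["industry_specializations"]),
        review_summary=data["review_summary"].strip(),
    )
```

Rejecting unknown categories at parse time keeps free-form model output from leaking new, inconsistent labels into the specialization pages.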

Quality filtering. Hong Kong’s recruitment industry has a unique challenge: hundreds of domestic helper placement agencies are listed alongside professional recruitment firms. Our classification pipeline separates them using service keywords, company name patterns (Chinese characters like 僱傭), and description analysis. A company where the majority of services relate to domestic helper placement gets filtered to a separate vertical.
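The filtering rule can be sketched as follows. This is an illustration under stated assumptions, not the production classifier: the keyword list is hypothetical, and the way the three signals are combined (majority rule when services are known, name pattern or description as a fallback) is one plausible reading of the description above.

```python
from dataclasses import dataclass, field

# Hypothetical keyword list; the real pipeline's signals are not published.
HELPER_SERVICE_KEYWORDS = ("domestic helper", "foreign domestic", "maid")
# Name pattern mentioned in the text.
HELPER_NAME_PATTERNS = ("僱傭",)


@dataclass
class Company:
    name: str
    description: str = ""
    services: list[str] = field(default_factory=list)


def _mentions_helper(text: str) -> bool:
    lowered = text.lower()
    return any(keyword in lowered for keyword in HELPER_SERVICE_KEYWORDS)


def is_domestic_helper_agency(company: Company) -> bool:
    """Return True when the company belongs in the domestic helper vertical."""
    if company.services:
        # Majority rule: more than half of the listed services are helper-related.
        helper_count = sum(_mentions_helper(s) for s in company.services)
        return helper_count * 2 > len(company.services)
    # Assumed fallback when no services are known: name pattern or description.
    name_hit = any(p in company.name for p in HELPER_NAME_PATTERNS)
    return name_hit or _mentions_helper(company.description)
```

Companies for which this returns `True` would be routed to the separate vertical rather than deleted.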

Keyword Research

We used DataForSEO’s API to understand what people actually search for. Key findings for Hong Kong recruitment:

| Keyword | Monthly Search Volume | Competition |
|---|---|---|
| recruitment agency hong kong | 1,600 | Medium |
| headhunter hong kong | 260 | High |
| executive search hong kong | 260 | Medium |
| top 10 recruitment agencies | 210 | Medium |

This data drives content strategy. Every article targets a specific keyword cluster. Every district page targets “[district] recruitment agencies” long-tail queries.
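A small sketch of how the keyword data could feed page targeting. The rows are copied from the table above. The `by_volume` and `district_query` helpers are hypothetical names for this example, and the template simply fills in the "[district] recruitment agencies" pattern.

```python
# Rows copied from the keyword table: (keyword, monthly volume, competition).
KEYWORDS = [
    ("recruitment agency hong kong", 1600, "Medium"),
    ("headhunter hong kong", 260, "High"),
    ("executive search hong kong", 260, "Medium"),
    ("top 10 recruitment agencies", 210, "Medium"),
]


def by_volume(rows):
    """Sort keyword rows from highest to lowest monthly volume."""
    return sorted(rows, key=lambda row: row[1], reverse=True)


def district_query(district, template="{district} recruitment agencies"):
    """Build the long-tail query a district page would target."""
    return template.format(district=district).lower()


if __name__ == "__main__":
    for keyword, volume, competition in by_volume(KEYWORDS):
        print(f"{volume:>5}  {competition:<6}  {keyword}")
    print(district_query("Wan Chai"))
```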

Knowledge Vault Architecture

This is where the project gets interesting.

Most directories store data in a database and render it through templates. We took a different approach inspired by Zettelkasten and personal knowledge management: every entity is an atomic Markdown note with explicit relationships.

    knowledge/headhunter/
      companies/   → 689 notes (one per company)
      districts/   → 18 notes (one per HK district)
      stations/    → 97 notes (MTR stations with nearby companies)
      industries/  → 22 notes (industry verticals)
      facts/       → atomic facts with sources
      articles/    → compiled from above

A company note links to its district ([[Central and Western District]]), its specializations ([[Executive Search]]), and its MTR station ([[Central Station]]). A district note links back to all companies in it. An article about “Recruitment in Central” pulls facts from the district note, company notes, and market data notes.
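To make the linking model concrete, here is a minimal sketch of extracting wikilinks from note bodies and deriving the backlinks that let a district note list its companies. The regular expression, the function names, and the in-memory `notes` mapping are assumptions for illustration; the site itself converts wikilinks to HTML through a remark plugin.

```python
import re
from collections import defaultdict

# Matches [[Target]], [[Target|alias]] and [[Target#heading]]; captures Target.
WIKILINK = re.compile(r"\[\[([^\[\]|#]+)(?:#[^\[\]|]*)?(?:\|[^\[\]]*)?\]\]")


def extract_links(markdown: str) -> list[str]:
    """Return wikilink targets in the order they appear."""
    return [target.strip() for target in WIKILINK.findall(markdown)]


def build_backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Map each link target to the set of note titles that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for title, body in notes.items():
        for target in extract_links(body):
            backlinks[target].add(title)
    return dict(backlinks)
```

With this index, a district page can be rendered from `backlinks["Central and Western District"]` without maintaining the company list by hand.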

Why this matters for AI search: When an AI crawler reads the Executive Search specialization page, it finds links to 199 individual company pages, each with unique descriptions. When it reads a company page, it finds links to the district, the specialization, and related companies. This interconnected structure is exactly what AI search engines need to build confidence in a source.

LLMs.txt

We generate an llms.txt file at the site root: a machine-readable summary of what the site covers. This is a recent convention (proposed by Jeremy Howard of Answer.AI in 2024) meant to help AI tools understand a site’s content without crawling every page.
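For reference, the llms.txt proposal describes a Markdown file with an H1 title, a blockquote summary, and H2 sections containing link lists. A minimal sketch of a generator in that shape follows. The `render_llms_txt` function, the section name, and the example URL are hypothetical and do not reflect the site's actual output.

```python
def render_llms_txt(site_name, summary, sections):
    """Render an llms.txt document.

    sections maps a heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    print(render_llms_txt(
        "Headhunter.com.hk",
        "Directory of recruitment agencies in Hong Kong.",
        {
            "Specializations": [
                # Hypothetical URL path, for illustration only.
                ("Executive Search",
                 "https://headhunter.com.hk/executive-search/",
                 "Firms offering executive search"),
            ],
        },
    ))
```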

The Engine: One Codebase, Six Verticals

The site runs on Astro (static site generator) with a SQLite database read at build time. A single SITE_SLUG environment variable controls which vertical to build. Same code, different data:

- headhunter.com.hk: recruitment agencies

- auditor.hk: audit and accounting firms

- renovation.hk: interior design and renovation

- warehouse.hk: logistics and warehousing

- water.com.hk: water services

- education.headhunter.com.hk: education sector
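The switching idea can be sketched as below. The real build runs in Astro with better-sqlite3; this Python version using the standard `sqlite3` module only illustrates the pattern. The slug values, the `companies` table, and its `site_slug` column are assumptions, since the source names the domains but not the schema.

```python
import os
import sqlite3

# Hypothetical slug-to-domain mapping; only the domains come from the text.
VERTICALS = {
    "headhunter": "headhunter.com.hk",
    "auditor": "auditor.hk",
    "renovation": "renovation.hk",
    "warehouse": "warehouse.hk",
    "water": "water.com.hk",
    "education": "education.headhunter.com.hk",
}


def resolve_site(env=None):
    """Read SITE_SLUG from the environment and fail fast on unknown values."""
    env = os.environ if env is None else env
    slug = env.get("SITE_SLUG")
    if slug not in VERTICALS:
        raise ValueError(f"SITE_SLUG must be one of {sorted(VERTICALS)}, got {slug!r}")
    return slug


def load_companies(conn, slug):
    """Return company names for one vertical (assumed schema)."""
    rows = conn.execute(
        "SELECT name FROM companies WHERE site_slug = ? ORDER BY name", (slug,)
    ).fetchall()
    return [name for (name,) in rows]


def demo_connection():
    """Build a tiny in-memory database with placeholder rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE companies (name TEXT, site_slug TEXT)")
    conn.executemany(
        "INSERT INTO companies VALUES (?, ?)",
        [("Example Recruiters", "headhunter"), ("Example Auditors", "auditor")],
    )
    return conn
```

Failing fast on an unknown slug avoids silently building an empty site when the variable is misspelled.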

Each site builds in under 2 seconds and deploys to Cloudflare Pages at zero cost. 461 pages for headhunter, ~730 across all verticals.

Analytics

Self-hosted Umami analytics (privacy-first, no cookies, GDPR-compliant) tracks visitor behaviour without sending data to third parties. Each vertical has its own dashboard, and daily automated reports are sent via Telegram.

What We’re Learning

This project is an ongoing experiment. Some early observations:

AI search engines value entity density. A page with 50 companies, each with a unique 2-sentence description, outperforms a page with 50 company names and addresses.

Cross-linking works. Pages that reference other pages on the same site get cited more often by AI search tools.

Structured data beats prose. AI crawlers parse tables, lists, and schema markup better than paragraphs.

Domain authority still matters. An exact-match .hk domain with genuine local content signals relevance to both traditional and AI search.

The enrichment quality ceiling is real. AI-generated descriptions are only as good as the source data. Companies without websites get thin descriptions. The next phase involves deeper research for top-tier firms.

Tech Stack

- Framework: Astro 5 (static site generation, <2s builds)

- Data: SQLite via better-sqlite3 (15MB, 18 tables, 7,448 rows)

- Content: Markdown with remark-wikilinks (knowledge vault → HTML)

- Styling: Tailwind CSS v4 with per-site CSS variables

- Deploy: Cloudflare Pages (zero cost, global CDN)

- Analytics: Self-hosted Umami on Synology NAS

- AI: Claude for enrichment, classification, and content generation

- Research: DataForSEO API for keyword data

- Enrichment: Python pipeline (batch processing, data normalization)

Current Status

The experiment continues. Next phases:

- Deeper enrichment for top 100 companies per vertical

- A/B testing different content structures for AI search performance

- Expanding to more Hong Kong verticals as domains become available

- Building an admin panel for non-technical operators to manage content
