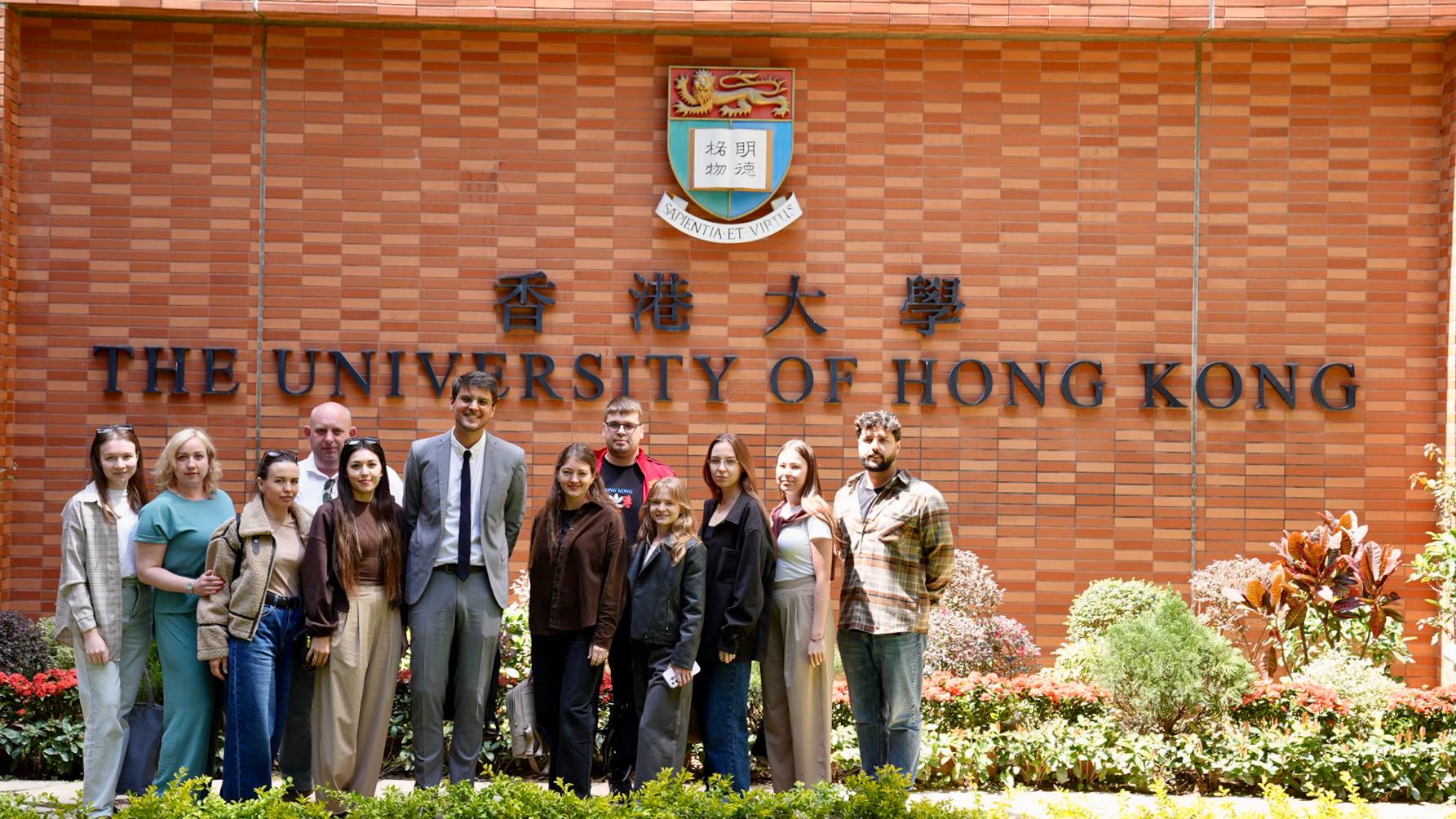

AI for Scientific Research: HKU Masterclass Recap

March 14, 2026

The University of Hong Kong (HKU). Top tier in Asia.

I walked in expecting to teach. Left realizing I should have been taking notes.

These aren’t people reading about science on the internet. They’re the ones producing it. Fiber optics. Radiobiology. Agricultural chemistry. Aerospace engineering. The kind of work where getting a formula wrong doesn’t just break your code. It breaks your experiment.

What we covered

The masterclass had one thesis: AI is a lever. Knowledge is the fulcrum. Without the right books, AI multiplies zero.

I opened with a personal story. My grandfather, Prof. German Mozhaev, wrote books on satellite orbital systems. Dense. Full of formulas I never understood. When friends came over from school, we’d flip through those pages and pretend to be geniuses.

Decades later, I built an iOS app with 14 differential equations. Not because I suddenly became a physicist. Because I found the right books and had the right tools to extract, index, and apply what was in them.

The pipeline: Deep Research finds the canonical sources. MinerU extracts LaTeX from scanned PDFs. A RAG system indexes 5,000+ chunks. Obsidian connects ideas across domains. And the AI agent orchestrates the whole thing.
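To make the indexing step concrete, here is a minimal sketch of chunking and retrieval. It is a toy, not the system used in the project: it swaps embeddings for plain term-frequency vectors with cosine similarity, and the names `ChunkIndex`, `chunk_text`, and the `max_words` parameter are assumptions made up for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk_text(text, max_words=40):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def cosine(a, b):
    """Cosine similarity between two term-frequency Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ChunkIndex:
    """Hypothetical in-memory index: every chunk keeps the name of its source."""

    def __init__(self):
        self.entries = []  # list of (source, chunk, term-frequency Counter)

    def add(self, source, text, max_words=40):
        """Chunk a document and store each chunk with its source label."""
        for chunk in chunk_text(text, max_words):
            self.entries.append((source, chunk, Counter(tokenize(chunk))))

    def search(self, query, k=3):
        """Return up to k (source, chunk) pairs, best match first; zero-score chunks are dropped."""
        q = Counter(tokenize(query))
        scored = [(cosine(q, vec), source, chunk) for source, chunk, vec in self.entries]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(source, chunk) for score, source, chunk in scored[:k] if score > 0]
```

The design point is that every hit comes back with its source attached, so an answer can always be traced to a book. A real setup would presumably replace the term-frequency vectors with an embedding model and a vector store.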

The trap

Here’s the part that matters most: AI lied to me.

Early in the project, the model generated a plausible formula for singing bowl harmonics: f_n = f_0 · n^1.8. Looked reasonable. It was completely wrong. Real bowls don’t follow a power law. Each bowl geometry has its own frequency ratios, measured with laser vibrometry (Inacio, 2006).

Without the book, I would have shipped garbage. The model was confident. The physics was wrong. This is why “books first, AI second” isn’t just a nice principle. It’s the only approach that works for serious research.
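The check itself is simple to automate once the measured ratios are typed in from the source. A minimal sketch, assuming the book's ratios are available as a plain list; the function names and the 2% default tolerance are assumptions for illustration, and no measured values are included here because they have to come from the book.

```python
def power_law_ratios(exponent, n_modes):
    """Ratios f_n / f_0 predicted by f_n = f_0 * n**exponent for n = 1..n_modes."""
    return [n ** exponent for n in range(1, n_modes + 1)]


def check_against_source(predicted, measured, tolerance=0.02):
    """Compare predicted ratios with tabulated ones.

    Returns (ok, worst), where worst is the largest relative error and
    ok is True only if worst does not exceed tolerance.
    """
    if len(predicted) != len(measured):
        raise ValueError("predicted and measured must have the same length")
    worst = max(abs(p - m) / abs(m) for p, m in zip(predicted, measured))
    return worst <= tolerance, worst
```

With the book's table in a list called `measured` (a hypothetical name), the call would be `check_against_source(power_law_ratios(1.8, len(measured)), measured)`. A generated formula that fails this check gets thrown out, however confident the model sounded.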

The people in the room

This is what made the session special. Every person brought a domain where this pipeline applies directly:

Daniiar Baianov works in aerospace engineering and industrial mentorship. The ray-transfer matrices from photonics? Same mathematical structure he uses for trajectory analysis.

Konstantin Vagin is in radiobiology. When your research involves ionizing radiation models, you can’t afford hallucinated decay constants.

Galina Kalinina studies communication dynamics of international students. Qualitative research, but the knowledge graph approach (connecting concepts across papers with wikilinks) maps perfectly to discourse analysis.

Marina Mikhailova works on agriculture and advanced fertilizers. Chemical reaction kinetics, soil models. Same extraction pipeline: find the canonical papers, OCR the formulas, index them, and let the agent answer questions grounded in real data.

Ekaterina Pashina teaches history and researches AI in education. She got the meta-level immediately: the masterclass itself was an example of what she studies.

Ekaterina Platoshkina works in human physiology and sports science. Biomechanical models, performance curves. Data-heavy, formula-heavy. Perfect use case.

Elena Soloveva specializes in botanical extraction. Chemical compound modeling, solvent optimization. She asked the sharpest question about validation: “How do you verify the formula is correct if you’re not a domain expert?” Answer: you go back to the book.

Danila Kharitonov works in fiber optics, silicon photonics, and sensor technology. We built a Manim animation of the key Saleh & Teich equations live during the session: Snell’s law, numerical aperture, V-parameter, group velocity dispersion. His reaction when the formulas animated on screen told me everything.
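For readers outside photonics, three of those equations fit in a few lines. This is a sketch of the standard textbook forms for a step-index fiber, not the code from the session: Snell's law n1·sin θ1 = n2·sin θ2, numerical aperture NA = sqrt(n_core² − n_clad²), and V = 2π·a·NA / λ with single-mode operation below V ≈ 2.405. Function and parameter names are assumptions, and group velocity dispersion is left out because it needs the material's dispersion data.

```python
import math

# First zero of the Bessel function J0: below this V only the fundamental mode is guided.
SINGLE_MODE_CUTOFF = 2.405


def refraction_angle(n1, n2, theta1):
    """Snell's law: angle (radians) in medium 2, or None on total internal reflection."""
    s = n1 * math.sin(theta1) / n2
    if abs(s) > 1.0:
        return None
    return math.asin(s)


def numerical_aperture(n_core, n_clad):
    """NA of a step-index fiber from core and cladding refractive indices."""
    if n_core <= n_clad:
        raise ValueError("a guiding fiber needs n_core > n_clad")
    return math.sqrt(n_core ** 2 - n_clad ** 2)


def v_parameter(core_radius, wavelength, n_core, n_clad):
    """Normalized frequency V; core_radius and wavelength must share the same unit."""
    return 2.0 * math.pi * core_radius / wavelength * numerical_aperture(n_core, n_clad)


def is_single_mode(core_radius, wavelength, n_core, n_clad):
    """True if V is below the single-mode cutoff of a step-index fiber."""
    return v_parameter(core_radius, wavelength, n_core, n_clad) < SINGLE_MODE_CUTOFF
```

Before anything like this goes into an animation or an app, the same rule applies: check each line against the equation in the book.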

Landysh Gabitova brought energy and great questions throughout.

What they left with

Three things:

- A pipeline they can use Monday. Deep Research + MinerU + local RAG. Not a concept. Working tools.

- A healthy skepticism. Every formula the model generates needs a source. If it can’t cite the book, it’s probably making it up.

- A demo they can show their colleagues. Manim renders animated formulas from LaTeX. The “wow” factor helps when you’re trying to convince your department to take AI seriously.
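The skepticism rule can be enforced mechanically. A minimal sketch, assuming each generated formula is stored with an optional source and locator; `FormulaClaim`, `is_grounded`, and `split_by_grounding` are hypothetical names, not part of any tool mentioned above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FormulaClaim:
    """A formula plus where it supposedly comes from (both fields may be missing)."""
    latex: str
    source: Optional[str] = None   # book or paper the formula is attributed to
    locator: Optional[str] = None  # page or equation number inside that source


def is_grounded(claim):
    """A claim counts as grounded only if both source and locator are non-blank."""
    has_source = bool(claim.source and claim.source.strip())
    has_locator = bool(claim.locator and claim.locator.strip())
    return has_source and has_locator


def split_by_grounding(claims):
    """Separate claims into (accepted, rejected) lists, preserving order."""
    accepted = [c for c in claims if is_grounded(c)]
    rejected = [c for c in claims if not is_grounded(c)]
    return accepted, rejected
```

The power law from earlier, `FormulaClaim(latex=r"f_n = f_0 n^{1.8}")`, has no source and lands in the rejected list. Passing the gate only means the claim can be checked; someone still has to open the book at that page.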

The live demos

Two demos landed the hardest:

Keynote automation. I described a slide and the agent created it in Apple Keynote via AppleScript. 22 slides in 30 seconds. The room went quiet.

Manim video generation. I described a formula; the agent wrote the Python scene and rendered a 1080p60 video with animated LaTeX. Three fields (acoustics, cymatics, Physarum simulation) visualized in one 37-second clip. This one got actual applause.

Manim-rendered: acoustic resonance, cymatics patterns, and physarum transport networks. All formulas extracted from indexed books via RAG.

Takeaway

The best part of teaching is learning what you don’t know. Nine researchers showed me nine domains where the same pipeline applies. The math is different. The books are different. The workflow is identical.

Find the canonical source. Extract the knowledge. Index it. Let the agent work with real data instead of hallucinated confidence.

Special thanks to @istanbei for organizing this. The room, the people, the energy. Couldn’t have happened without it.

If you want to run a similar session for your department or research group: get in touch.
